Although AI has moved fast, many organisations haven’t. Most leaders we speak to aren’t short on ideas, proofs of concept, or vendor demos. The challenge is turning AI into something repeatable, a capability you can trust, scale, and steer without creating new risks or bottlenecks.

That gap is the starting point for this conversation between Romano Roth, Prof. Dr. Oliver Gassmann, and moderator Prof. Dr. Naomi Haefner. They explore what agentic AI actually means for the enterprise, where it works today, and what organisations need to change before they can scale it.

The discussion surfaces a productive tension. Oliver, drawing on his research article “The Non-Human Enterprise”, pushes the idea of fully autonomous, agent-driven organisations as a radical thought experiment. Romano argues that real enterprises are socio-technical systems that need human-led steering, feedback loops and continuous adaptation. Where they converge is just as revealing as where they disagree.

Meet the speakers

Romano Roth is the Global Chief of Cybernetic Transformation and a Partner at Zühlke. Romano shapes Zühlke’s global strategy for cybernetic transformation, platform engineering and AI integration.

Prof. Dr. Oliver Gassmann is a Professor of Technology Management at the University of St. Gallen and Vice Chairman and Partner at Zühlke. He brings deep expertise in innovation management and the leadership required to make transformation stick.

Prof. Dr. Naomi Haefner is an Assistant Professor of Technology Management at the University of St. Gallen. Naomi researches how emerging technologies reshape organisations, decision-making and management practices.

The agentic enterprise: a radical thought experiment

Oliver defines the agentic enterprise as the ultimate vision of a fully automated organisation: AI agents, given defined goals and tools, plan, execute, and collaborate, much like the “dark factories” we already see in manufacturing. He pushes the idea to its extreme: “If we were to design a global insurance company on a green field with just five people, what would it look like?”

Romano brings the counterpoint. While you could build an agentic enterprise for an online shop that sells black socks, a global insurance provider is a different matter entirely. Real enterprises are complex socio-technical systems with feedback loops, regulatory constraints and human judgement at their core. Today’s AI agents reliably handle five to seven steps autonomously, but then a human needs to step in.

This tension between radical automation as a thought experiment and pragmatic, human-led transformation runs through the entire discussion and is what makes it valuable.

Where AI agents deliver value today

Oliver shares a compelling example from the telecom industry. Autonomous network agents monitor radio access networks and respond in real time to unplanned events such as a festival, a traffic jam, or a demonstration.

What previously took a team of engineers one hour now happens autonomously in eight minutes, and with better performance.

These are what both speakers call “agentic islands”: bounded, well-understood domains where automation delivers clear, measurable gains. The challenge is connecting these islands into end-to-end value streams.

Why most AI initiatives disappoint

Both speakers are blunt about why so many organisations struggle: they add AI onto existing processes and expect magical results.

According to Romano, the current focus on generative AI tools (which vendor, which LLM) distracts from the real work. “Go away from the tool itself. Think about identifying processes and bottlenecks.” His recommended method is value stream mapping, a technique from the Toyota Production System. This means mapping every step, analysing process time, lead time, and percentage complete and correct, and only then designing the future state. Sometimes that future state doesn’t even involve AI.
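The metrics Romano lists can be made concrete with a small calculation. The following is a minimal sketch in Python, not a method prescribed in the conversation: the `Step` class, the `summarise` function, and the claims-handling numbers are all hypothetical. It totals process time and lead time, derives flow efficiency (process time divided by lead time), multiplies the per-step percentage complete and correct into one rolled figure, and names the step with the longest waiting time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One step of a value stream (hypothetical structure for illustration)."""
    name: str
    process_time_h: float    # hands-on work time, in hours
    lead_time_h: float       # elapsed time including waiting, in hours
    complete_correct: float  # share of output usable downstream without rework, 0..1


def summarise(steps: list[Step]) -> dict:
    """Aggregate value stream metrics for an ordered list of steps."""
    if not steps:
        raise ValueError("at least one step is required")
    process_time = sum(s.process_time_h for s in steps)
    lead_time = sum(s.lead_time_h for s in steps)
    if lead_time <= 0:
        raise ValueError("total lead time must be positive")

    # Rolled percentage complete and correct: the product across all steps.
    rolled = 1.0
    for s in steps:
        rolled *= s.complete_correct

    # Waiting time per step is lead time minus process time.
    longest_wait = max(steps, key=lambda s: s.lead_time_h - s.process_time_h)

    return {
        "process_time_h": process_time,
        "lead_time_h": lead_time,
        "flow_efficiency": process_time / lead_time,
        "rolled_complete_correct": rolled,
        "longest_wait": longest_wait.name,
    }


# Hypothetical current-state map of a claims-handling flow (made-up numbers).
current_state = [
    Step("intake", process_time_h=0.5, lead_time_h=4.0, complete_correct=0.90),
    Step("assessment", process_time_h=2.0, lead_time_h=40.0, complete_correct=0.80),
    Step("payout", process_time_h=0.5, lead_time_h=16.0, complete_correct=0.95),
]

summary = summarise(current_state)
print(summary)
```

In this made-up example only 5% of the elapsed time is hands-on work, and the assessment step accounts for most of the waiting. That is where a future-state design would look first, with or without AI.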

As Oliver points out, most European companies still think in hardware-era strategy cycles. The automotive industry plans around “SOP minus three”: three years before the start of production. But AI frameworks change every three to four months, and inference costs drop by 900x per year. Strategy as a fixed plan has to give way to strategic flexibility.

A framework for deciding what to transform

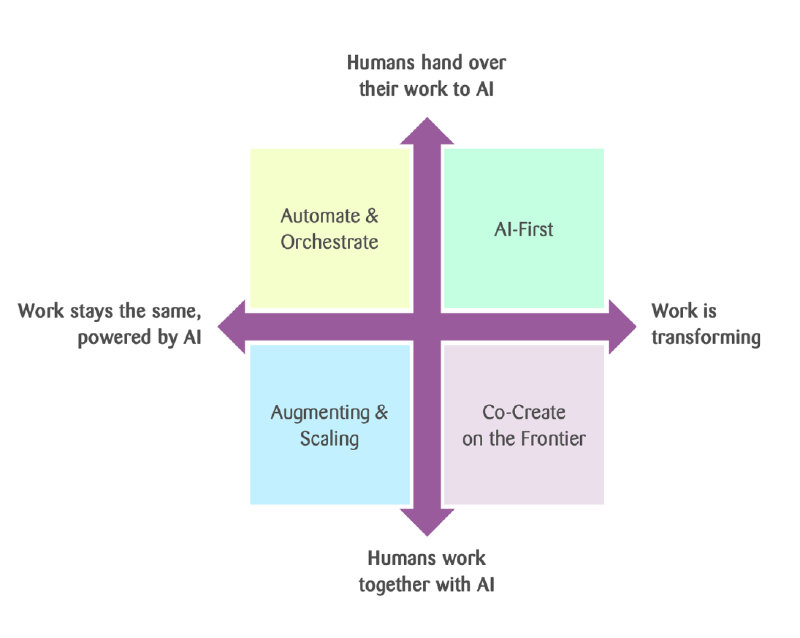

Romano uses a practical two-axis framework when advising companies. It produces four quadrants that help teams map their current work and decide where to move. The thinking itself (examining every process step and deciding whether to change it, automate it, or leave it) is what most organisations haven’t done yet. Without it, AI is just an expensive addition to a broken process.

Highlights from the conversation

A consistent theme is that AI can enhance judgement and execution, but it can’t compensate for missing clarity. If goals aren’t defined, accountability is unclear, or teams aren’t encouraged to act, AI alone won’t fix it.

Romano illustrates this with a cautionary story. A bachelor thesis student delivered polished, AI-generated slides on Kubernetes certificate management, but couldn’t explain any of the solutions or why one built on another. “Use AI, absolutely, but you need to own the work. You need to understand it,” says Romano. This is a leadership responsibility: ensuring that people take ownership of AI-generated results.

“Start with strategy, tech comes second,” insists Oliver. Several questions come before the technology: Where do we differentiate? What’s our positioning from a customer perspective?

AI is the second or third step, but it should never be the first. Oliver adds two more preconditions: break down silos into cross-functional teams (because end-to-end solutions require it) and build genuine AI literacy (not just rolling out Copilot and counting logins).

The AI literacy point is practical. Oliver sees executives excited about high adoption numbers, but the actual usage is basic. Real productivity gains require understanding what AI can and can’t do and measuring outcomes instead of only activity.

When systems move from assisting to acting, trust can’t be an afterthought. You need explicit boundaries: what the AI can decide, what must be escalated, what gets logged, and how you detect drift.
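These boundaries can be written down as an explicit policy instead of being left implicit in prompts. The sketch below is one possible shape under stated assumptions, not an implementation described by the speakers: the `Boundary` fields, the thresholds, the action names, and the use of the escalation rate as a crude drift signal are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    EXECUTE = "execute"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Boundary:
    """What the agent may decide alone (hypothetical fields)."""
    allowed_actions: frozenset
    max_impact: float      # largest impact the agent may cause without sign-off
    min_confidence: float  # below this, a human decides


def decide(action: str, impact: float, confidence: float,
           boundary: Boundary, audit_log: list) -> Outcome:
    """Check a proposed action against the boundary and log the decision."""
    reasons = []
    if action not in boundary.allowed_actions:
        reasons.append("action not allowed")
    if impact > boundary.max_impact:
        reasons.append("impact above limit")
    if confidence < boundary.min_confidence:
        reasons.append("confidence below threshold")

    outcome = Outcome.ESCALATE if reasons else Outcome.EXECUTE
    # Every decision is logged, whether executed or escalated.
    audit_log.append({
        "action": action,
        "impact": impact,
        "confidence": confidence,
        "outcome": outcome.value,
        "reasons": reasons,
    })
    return outcome


def escalation_rate(audit_log: list, window: int) -> float:
    """Share of escalated decisions among the most recent `window` entries."""
    if window <= 0:
        raise ValueError("window must be positive")
    recent = audit_log[-window:]
    if not recent:
        return 0.0
    return sum(e["outcome"] == "escalate" for e in recent) / len(recent)


def drifting(audit_log: list, baseline_rate: float,
             tolerance: float, window: int) -> bool:
    """Crude drift signal (an assumption): escalations well above the baseline."""
    return escalation_rate(audit_log, window) > baseline_rate + tolerance


# Hypothetical usage with made-up actions and limits.
boundary = Boundary(frozenset({"rebalance_cell"}), max_impact=100.0, min_confidence=0.8)
audit_log: list = []
print(decide("rebalance_cell", impact=10.0, confidence=0.9,
             boundary=boundary, audit_log=audit_log))
print(decide("shutdown_site", impact=10.0, confidence=0.99,
             boundary=boundary, audit_log=audit_log))
print(drifting(audit_log, baseline_rate=0.1, tolerance=0.2, window=2))
```

Keeping the policy as data separates the question of what the agent may do from how it does it, so the limits can be reviewed and changed without touching the agent itself.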

Rather than multi-year programmes, both speakers lean into short, instrumented loops. This is the core of the Cybernetic Enterprise approach.

“You need to become a company which senses and learns and continuously adapts. You can’t do any more one-year or two-year or five-year plans.” - Romano Roth

Oliver agrees and reframes it. The ultimate goal is becoming a truly data-driven company. But he’s also realistic. He believes many “AI-first” initiatives quickly turn into data integration projects, because the data simply isn’t there in the right quality.

Human-led, AI-enabled

Oliver puts a number on the pace of change: even assuming a modest 10x improvement per year in AI capabilities, that’s 10,000x in four years. For any company thinking on a five-year horizon, the implications are enormous. “We will see radically new solutions, things we couldn’t have thought of,” he says.
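The arithmetic behind that figure is plain compounding. Written out, with $g$ as the assumed annual improvement factor and $n$ as the number of years (both symbols are introduced here for illustration only):

```latex
\[
  g^{\,n} = 10^{4} = 10\,000 \qquad \text{for } g = 10,\; n = 4 .
\]
```

Under the same assumption, a full five-year horizon would compound to $10^{5} = 100\,000$.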

Romano’s vision for the near future is equally bold: AI will work directly on data, making middleware, APIs, and traditional user interfaces unnecessary. Interaction will be conversational, whether typed or spoken. “The whole thing about user interfaces, apps, middleware; all this will be gone.”

Both speakers compare this moment to the 1990s, when no one predicted the app economy that would follow. The opportunity space is real, but so is the disruption, particularly for inexperienced workers entering the job market.

What to do next

Map the value stream. Pick one end-to-end flow. Use value stream mapping to identify bottlenecks: process time, lead time, percentage complete and correct. Design the future state. AI may or may not be part of the solution. Start with efficiency gains; move to the customer journey once you’ve proven the approach.

Set trust boundaries. Be explicit about decision boundaries, escalation paths, monitoring and rollback. The telecom example shows that a guardian agent is essential infrastructure, not an optional add-on.

Build the platform. Standardise patterns for secure data access, model integration, deployment and observability. Build governance into the AI platform so teams can move fast without breaking trust. This is what separates companies that scale from companies that accumulate demos.

AI value doesn’t come from deploying models. It comes from designing an organisation that can learn and deliver in real time, with the right feedback loops, foundations, and governance in place.

This article was originally published on Zühlke Insights.